Hello,

I’ve got an IPFire system at home, on which I use LVM on an additional disk that holds my Samba shares.

Today I ran the update to »core175«. After the reboot, the system wouldn’t come up again.

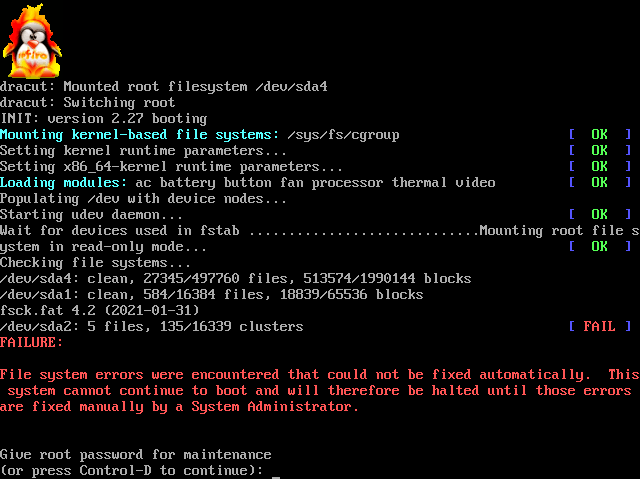

The »Wait for devices used in fstab ...« step took quite long and finally failed, as shown in the picture.

I commented out the LVM volumes in fstab, and the system started again.

blkid recognizes my disk as LVM2_member, but omits the LVs.

vgdisplay shows the expected output, though.

It appears that the LVs are »NOT available« (lvdisplay) or »inactive« (lvscan):

    [root@ipfire ~]# lvdisplay
      --- Logical volume ---
      LV Path                /dev/vg0/lv1
      LV Name                lv1
      VG Name                vg0
      LV UUID                INZqC7-JaQq-lCww-Ec2G-nLWX-kYvZ-hYxth2
      LV Write Access        read/write
      LV Creation host, time ipfire.localdomain, 2023-06-29 14:10:26 +0200
      LV Status              NOT available
      LV Size                1.00 GiB
      Current LE             256
      Segments               1
      Allocation             inherit
      Read ahead sectors     auto

      --- Logical volume ---
      LV Path                /dev/vg0/lv2
      LV Name                lv2
      VG Name                vg0
      LV UUID                YNaISQ-oNRf-cZsd-APaM-IVRe-W8i0-7p9V1z
      LV Write Access        read/write
      LV Creation host, time ipfire.localdomain, 2023-06-29 14:13:30 +0200
      LV Status              NOT available
      LV Size                1020.00 MiB
      Current LE             255
      Segments               1
      Allocation             inherit
      Read ahead sectors     auto

    [root@ipfire ~]# lvscan
      inactive          '/dev/vg0/lv1' [1.00 GiB] inherit
      inactive          '/dev/vg0/lv2' [1020.00 MiB] inherit
    [root@ipfire ~]#

Running vgchange --activate y vg0 activates the volumes, so that they are usable again.
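For anyone who wants to reproduce the manual workaround, here is a minimal sketch of what I do after boot. The helper name inactive_lvs is made up for illustration; it only filters lvscan-style text for lines marked »inactive« and does not change anything by itself. The commented commands assume the volume group is called vg0, as on my system, and that the fstab entries have been re-enabled before mount -a is run.

```shell
#!/bin/sh
# Hypothetical helper (name is an assumption, not part of LVM or IPFire):
# reads lvscan-style text on stdin and prints the path of every LV
# whose first column is "inactive".
inactive_lvs() {
    # q holds a single quote so the quotes around the LV path can be stripped
    awk -v q="'" '$1 == "inactive" { gsub(q, "", $2); print $2 }'
}

# Manual workaround on the affected system, to be run as root.
# Left commented out so that sourcing this sketch changes nothing:
#
#   lvscan | inactive_lvs        # list LVs that were not activated at boot
#   vgchange --activate y vg0    # activate all LVs in volume group vg0
#   mount -a                     # mount the (re-enabled) fstab entries
```

This is only the manual procedure written down; it does not explain why the activation at boot stopped happening.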

Is that behaviour expected?

What could be done to activate the LVs at boot time again?

Regards

Matthias