Hi!

I’m trying to install IPFire in a Hyper-V gen 2 VM (4 cores, 2048 GB RAM) and I’m stuck at the language selection: the UI simply does not respond to the keyboard. What can I do to install IPFire?

Hi, try hyper-v gen 1. Integration features won’t work anyway and gen 2 requires secure boot which is not supported by IPFire - as far as I know. Stay with an even number of cores, minimum 2.

– Simulacron

Integration features could be included as modules.

Gen 2 does not require secure boot. I disabled secure boot in the VM properties, but still got stuck at the language selection.

I need gen 2 because it offers better performance. I need a 10-25 Gbit/s router/firewall. I’m currently using pfSense, but need to migrate to something else, since the Hyper-V network drivers for BSD are buggy.

Just for me, what is the difference between gen 1 and 2?

Sorry don’t know the link in other languages

Gen 2 uses synthetic devices, which provide better offload capabilities; gen 1 uses emulated legacy adapters in the guest. That’s why it’s important to use synthetic devices if you want good performance in a Hyper-V VM.

You could read this article to get brief understanding of difference between them.

And actually it’s strange to me that IPFire can’t be installed on gen 2, since all Hyper-V synthetic drivers are included in the mainline Linux kernel repo. I haven’t had any problems installing any other distro (Debian, Ubuntu, CentOS, Gentoo).

As far as I know we have supported Hyper-V with synthetic drivers for many, many years.

Whether you will be able to get 25 Gbit/s through the hypervisor, I do not know…

Yep, the Linux kernel supports it by default, but I can’t install IPFire on gen 2, simply because it gets stuck at the language selection: the VM does not respond to any key on the keyboard.

Could it be that the hyperv_keyboard module is missing from the initramfs?
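One way to check this would be to list the contents of the installer’s initramfs and look for the Hyper-V modules by name. Below is a minimal Python sketch, assuming you already have that listing as plain text with one file path per line. The function name `missing_hyperv_modules` and the module set are my own choices for illustration, based on the usual upstream module names, not on IPFire’s build.

```python
from __future__ import annotations

import re

# Upstream module names for the Hyper-V guest drivers (an assumed set for
# illustration; adjust it to what your kernel actually builds as modules).
HYPERV_MODULES = (
    "hv_vmbus",         # VMBus, needed by all synthetic devices
    "hyperv_keyboard",  # synthetic keyboard
    "hid_hyperv",       # synthetic mouse
    "hv_storvsc",       # synthetic SCSI storage
    "hv_netvsc",        # synthetic network adapter
    "hyperv_fb",        # synthetic framebuffer
)


def missing_hyperv_modules(listing: str) -> list[str]:
    """Return the entries of HYPERV_MODULES that do not appear in listing.

    listing is plain text with one file path per line. Module file names
    may use '-' or '_' and may be compressed (.ko, .ko.xz, .ko.gz, ...).
    """
    present = set()
    for line in listing.splitlines():
        # Keep only the file name, then strip the .ko[.compression] suffix.
        name = line.strip().rsplit("/", 1)[-1]
        match = re.match(r"(.+?)\.ko(\..+)?$", name)
        if match:
            # The kernel treats '-' and '_' in module names as equivalent.
            present.add(match.group(1).replace("-", "_"))
    return [m for m in HYPERV_MODULES if m not in present]
```

Note that a driver compiled directly into the kernel does not show up as a `.ko` file, so a name reported as missing here only means it is not shipped as a loadable module in that image.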

I haven’t had this problem with the language selection on Hyper-V gen 1. Maybe I can give it a try in the near future with a test install on a gen 2 VM.

But I also doubt that you’ll get more than 10G throughput. The virtual switch is a 10G device. What kind of hardware are your interfaces that you expect to reach up to 25G?

The virtual switch is not a 10G device. It is a device whose bandwidth depends on the bandwidth of the hypervisor’s Ethernet card, so in my case up to 25 Gbit/s.

I’m using 2 Marvell FastLinQ 41000 (QLogic FastLinQ QL41212H 25GbE) adapters on each hypervisor, with switch embedded teaming.

https://www.marvell.com/documents/8l3wqfzcft2xsux5pgbj/

and mellanox sn2010 switches

Thx for the info.

Offhand I don’t know where I got the 10G for the virtual switch from. Maybe from a book on my shelf, but I won’t skim through it now, so I can’t prove it. I guess you’re right. But keep in mind that purely internal communication runs at 10G, even on a machine with only a physical 1G adapter (and it really does: tested with iperf on my test system, which has only some 1G NICs).

Also I found on the web that Hyper-V recently learned to show the real bandwidth for the adapter in the VM whereas it was 10G in the past whatever the real adapter performs.

There isn’t a fixed internal bandwidth.

I’m using the network for HCI as well. For the storage network my hypervisors use 4 virtual adapters on top of switch embedded teaming, and can push practically 100 Gbit/s over 4 links with jumbo frames (pNIC(1-2-3-4) -> vSwitch -> host vNIC(1-2-3-4)).

So gen 2 support would be a big step forward for big IPFire deployments, since there are not many alternatives. VyOS is harder to support; pfSense/OPNsense are really easy to implement, but I ran into a 10 Gbit/s wall in Hyper-V.

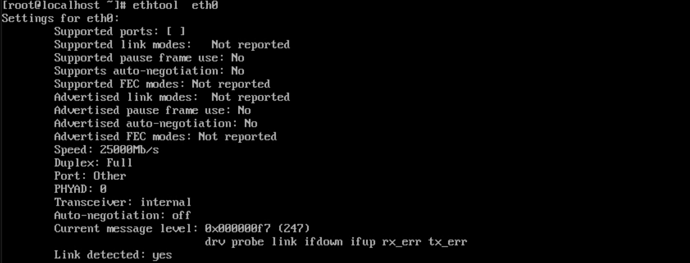

That’s a screenshot from a test CentOS 8 installation in a Hyper-V guest.

I found this. Are these modules included?

Prepare Linux for imaging - Azure Virtual Machines | Microsoft Learn

If a custom kernel is required, we recommend a recent kernel version (such as 3.8+). For distributions or vendors who maintain their own kernel, you’ll need to regularly backport the LIS drivers from the upstream kernel to your custom kernel. Even if you’re already running a relatively recent kernel version, we highly recommend keeping track of any upstream fixes in the LIS drivers and backport them as needed. The locations of the LIS driver source files are specified in the MAINTAINERS file in the Linux kernel source tree:

F: arch/x86/include/asm/mshyperv.h

F: arch/x86/include/uapi/asm/hyperv.h

F: arch/x86/kernel/cpu/mshyperv.c

F: drivers/hid/hid-hyperv.c

F: drivers/hv/

F: drivers/input/serio/hyperv-keyboard.c

F: drivers/net/hyperv/

F: drivers/scsi/storvsc_drv.c

F: drivers/video/fbdev/hyperv_fb.c

F: include/linux/hyperv.h

F: tools/hv/

The following patches must be included in the kernel. This list can’t be complete for all distributions.

- ata_piix: defer disks to the Hyper-V drivers by default

- storvsc: Account for in-transit packets in the RESET path

- storvsc: avoid usage of WRITE_SAME

- storvsc: Disable WRITE SAME for RAID and virtual host adapter drivers

- storvsc: NULL pointer dereference fix

- storvsc: ring buffer failures may result in I/O freeze

- scsi_sysfs: protect against double execution of __scsi_remove_device

I also found a funny thing:

I can successfully install IPFire, but only when installing via console=ttyS0,115200n8. It seems something is really messed up with the modules.
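For anyone unfamiliar with that boot argument: `console=ttyS0,115200n8` names a serial device followed by baud rate, parity and data bits. Here is a small Python sketch that splits such an argument out of a kernel command line. The names `ConsoleArg` and `parse_console_args` are made up for this example, and the 9600n8 fallback is the default the kernel documentation gives for serial consoles when the options are left out.

```python
from __future__ import annotations

import re
from typing import NamedTuple


class ConsoleArg(NamedTuple):
    device: str  # e.g. "ttyS0"
    baud: int    # e.g. 115200
    parity: str  # "n" (none), "o" (odd) or "e" (even)
    bits: int    # number of data bits


# Options look like BBBBPNF: baud, parity, bits, optional 'r' flow control.
_OPTIONS = re.compile(r"(\d+)([noe])?(\d)?r?$")


def parse_console_args(cmdline: str) -> list[ConsoleArg]:
    """Return every console= argument found in a kernel command line."""
    result = []
    for token in cmdline.split():
        if not token.startswith("console="):
            continue
        device, _, options = token[len("console="):].partition(",")
        # Defaults documented for serial consoles when options are omitted.
        baud, parity, bits = 9600, "n", 8
        match = _OPTIONS.match(options)
        if match:
            baud = int(match.group(1))
            parity = match.group(2) or parity
            bits = int(match.group(3) or bits)
        result.append(ConsoleArg(device, baud, parity, bits))
    return result
```

Feeding it the contents of `/proc/cmdline` from a running system would show which consoles the kernel was actually booted with.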

Then the issue seems to be the graphics card or the graphics card modules.

Do you have any other options as driver or settings for VRAM?

Hi,

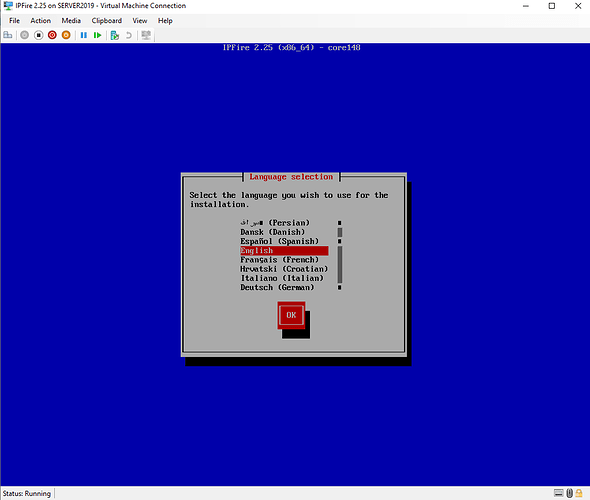

I’m having the same issue:

Hyper-V on Windows Server 2019

ipfire-2.23.x86_64-full-core139.iso

Second generation virtual machine with Secure Boot disabled

On the initial screen (GRUB) I can use the keyboard, but after selecting “Install IPFire 2.23 x86_64” no input is possible on the language selection screen. This also affects sending Ctrl-Alt-Del from Hyper-V:

[Window Title]

Virtual Machine Connection

[Main Instruction]

Could not send keys to the virtual machine.

[Content]

Interacting with the virtual machine’s keyboard device failed.

The operation cannot be performed while the object is in its current state.

[Close]

It seems as if Ubuntu had some similar issues in the early days of Hyper-V second generation virtual machines: https://bugs.launchpad.net/ubuntu/+source/linux/+bug/1285434

Till now I’m using a gen1 VM but would like to set up a new one on gen2.

Kind regards,

Markus

Hi there IPFire community,

I tried x86_64 Core 151 in a Hyper-V 2016 gen 2 VM with no secure boot, and I’m also stuck at the language selection with no keyboard.

I should also mention that i586 Core 148 still supports multiple virtual CPUs, whereas Core 149 does not boot with 2 CPUs - error: “CPU 1 not responding for 23s”

It’s booting with 1 CPU.

Does anyone have positive experiences with an x86_64 gen 2 VM, or with i586 and multiple CPUs after Core 148, on Hyper-V?

Hello Jim,

welcome here.

I have repeatedly been trying to get access to one of the affected Hyper-V hosts. I do not have access to one myself, except Azure, and therefore nobody on the team has ever been able to reproduce and test this.

You should not use a 32 bit installation in a virtual environment. What is your reason to try that?